On the dissemination/evaluation loop for Research Software

- Track: Open Research Tools and Technologies devroom

- Room: D.research

- Day: Saturday

- Start: 12:35

- End: 12:50

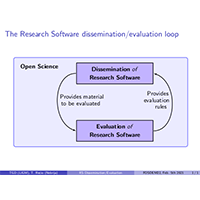

This talk explores the interconnections that link Research Software (RS) dissemination and evaluation issues in the Open Science context, following the guidelines of the CDUR RS assessment protocol.

In our FOSDEM 2021 presentation:

Free/Open source Research Software production at the Gaspard-Monge Computer Science laboratory - Lessons learnt https://archive.fosdem.org/2021/schedule/event/openresearchgaspard_monge/

we analyzed the evolution of several problems that arise when considering the Research Software (RS) production of a laboratory, and we highlighted several issues that should be addressed to deal with these problems within the Open Science context. Among them, we called for the establishment of sound dissemination and evaluation procedures following the guidelines of the CDUR RS assessment protocol presented in:

Gomez-Diaz T and Recio T. On the evaluation of research software: the CDUR procedure. F1000Research 2019, 8:1353 https://doi.org/10.12688/f1000research.19994.2

CDUR comprises four steps that can be succinctly described as follows:

- Citation, to deal with correct RS identification,

- Dissemination, to measure good dissemination practices,

- Use, devoted to the evaluation of usability aspects, and

- Research, to assess the impact of the scientific work.
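As a reading aid only, the four steps above can be sketched as a small ordered checklist. This is a minimal illustration, not part of the CDUR paper or of any tool by the authors: the `Step` enum, the `Assessment` record, and the `pending` and `cdur_acronym` helpers are hypothetical names introduced here, and the step descriptions are copied from the list above.

```python
from dataclasses import dataclass
from enum import Enum


class Step(Enum):
    """The four CDUR steps, in the order the abstract lists them."""
    CITATION = "correct RS identification"
    DISSEMINATION = "good dissemination practices"
    USE = "usability aspects"
    RESEARCH = "impact of the scientific work"


@dataclass
class Assessment:
    """Hypothetical record of which steps a reviewer has marked as satisfied."""
    software: str
    satisfied: frozenset = frozenset()

    def pending(self):
        # Steps not yet marked satisfied, in CDUR order (Enum definition order).
        return [step for step in Step if step not in self.satisfied]


def cdur_acronym():
    # The acronym is the initial letter of each step name, in order.
    return "".join(step.name[0] for step in Step)


if __name__ == "__main__":
    # "example-tool" is a placeholder name, not a real research software.
    assessment = Assessment("example-tool", frozenset({Step.CITATION}))
    print(cdur_acronym())
    for step in assessment.pending():
        print(f"{step.name.title()}: {step.value}")
```

Keeping the steps in an ordered enumeration reflects only the order in which the abstract presents them; how each step is actually scored is defined by the CDUR procedure itself and is not modelled here.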

In this talk we would like to analyze RS dissemination and evaluation issues and the above-mentioned protocols in more depth, focusing in particular on how these protocols can be adapted to the different situations that may arise in evaluation processes, for example different evaluation contexts (career, review...).

We will also highlight the interconnections that link dissemination and evaluation issues: RS dissemination needs to adjust to evaluation rules, and only suitably disseminated RS (possibly in a restricted context) can be evaluated.

This is joint work with Tomas Recio, Professor at the University Antonio de Nebrija (Madrid).

Speakers

- Teresa Gomez-Diaz

Links

- Free/Open source Research Software production at the Gaspard-Monge Computer Science laboratory - Lessons learnt (FOSDEM 2021)

- Gomez-Diaz T and Recio T. On the evaluation of research software: the CDUR procedure. F1000Research 2019, 8:1353

- Gomez-Diaz T and Recio T. Towards an Open Science definition as a political and legal framework: on the sharing and dissemination of research outputs

- Video recording (WebM/VP9)

- Video recording (mp4)
